Streaming Engine to 5k+ Users (4) - Shifting to Capacity Test

Test Goal

The next step is the last part of the load test: a capacity test with 5000 concurrent users (CCU).

Conclusion

The system can now ramp up to 5000 concurrent users within 5 minutes and hold that load for another 5 minutes. All of them continuously receive messages over WebSocket.

If you are interested in the testing process, please continue reading.

Capacity Test Round

export const options = {
  stages: [
    { duration: '5m', target: 5000 }, // 5 minutes ramp-up to 5000 VU
    { duration: '5m', target: 5000 }, // Maintain 5000 users stable (quiet period)
    { duration: '30s', target: 0 }, // Test end, graceful shutdown within 30 seconds
  ],
};
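To make the shape of this load profile concrete, here is a minimal plain JavaScript sketch (not part of the k6 script) that estimates how many virtual users should be active at a given second. It assumes a linear ramp inside each stage and a start at 0 VUs; the `expectedVUs` helper and the `seconds` field are hypothetical names introduced only for this illustration, and k6's real scheduling may differ by a VU or so.

```javascript
// Same stage profile as the options above, with durations written in seconds.
const stages = [
  { seconds: 300, target: 5000 }, // ramp-up
  { seconds: 300, target: 5000 }, // hold
  { seconds: 30, target: 0 },     // ramp-down
];

// Estimate the VU count at second `t`, assuming linear interpolation
// from the previous stage's target to the current stage's target.
function expectedVUs(t, stageList, startVUs = 0) {
  let from = startVUs;
  let elapsed = 0;
  for (const { seconds, target } of stageList) {
    if (t <= elapsed + seconds) {
      const progress = (t - elapsed) / seconds;
      return Math.round(from + (target - from) * progress);
    }
    elapsed += seconds;
    from = target;
  }
  return from; // past the last stage: stay at the final target
}

console.log(expectedVUs(150, stages)); // halfway through the ramp-up: 2500
console.log(expectedVUs(450, stages)); // middle of the hold: 5000
console.log(expectedVUs(630, stages)); // end of the ramp-down: 0
```

Comparing these estimates with the active-connection chart is a quick way to check that the server-side connection count tracks the intended profile.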

Observations

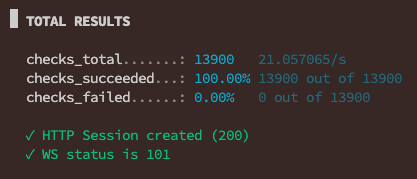

1. k6 Result ✅

The test was 100% successful. No failed requests.

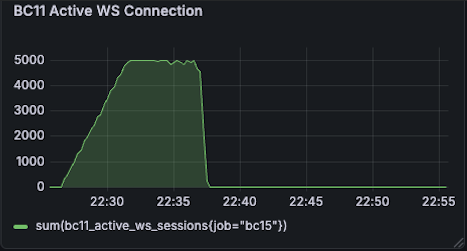

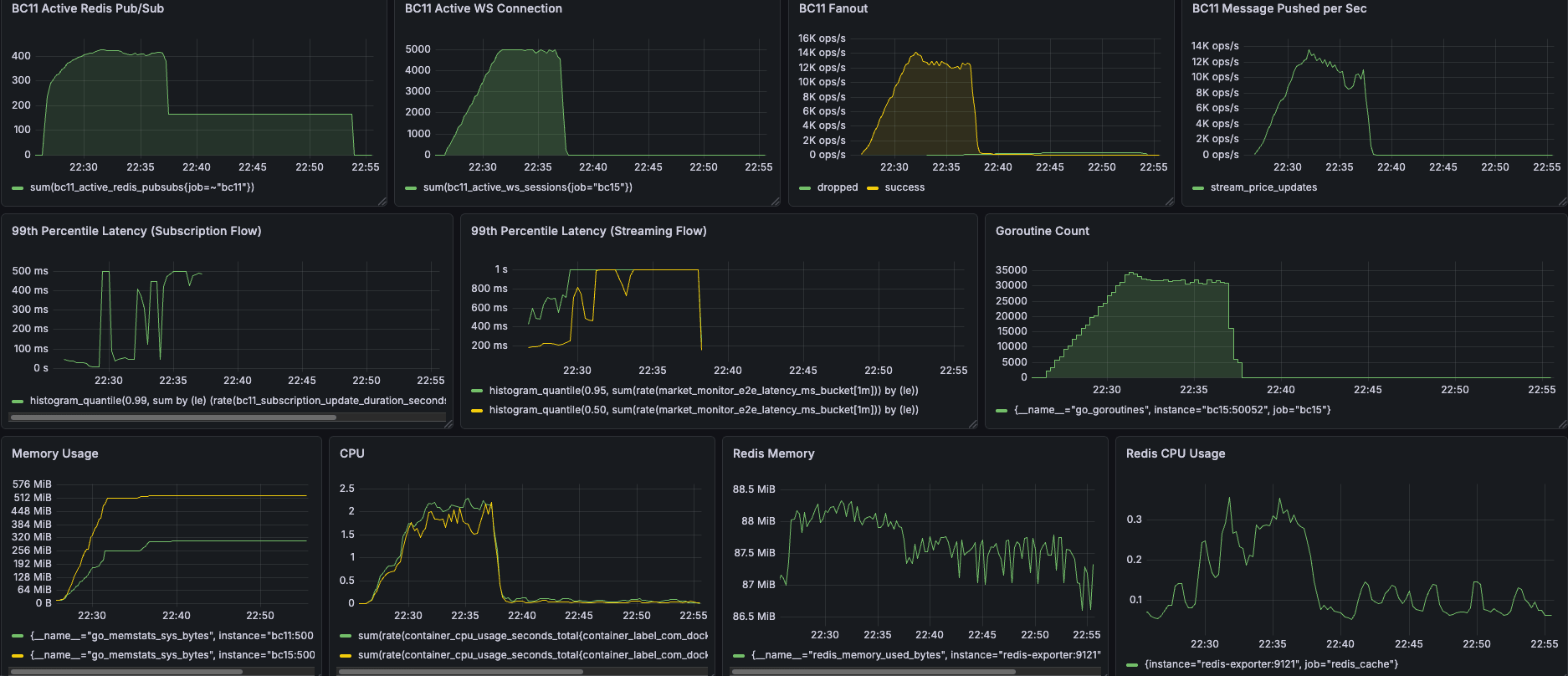

2. BC11 Active WS Connection ✅

The green line climbed steadily, stopped precisely at 5000, stayed flat for 5 minutes, and then dropped straight to zero. This shows that the k6 script executed exactly as planned and that the server handled all 5000 long-lived connections.

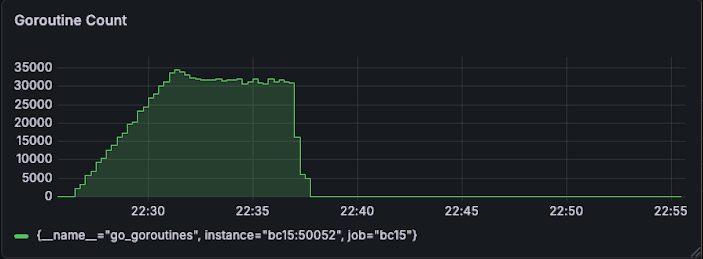

3. Goroutine Count ✅

After the test ended, all goroutines were reclaimed immediately, with none leaked.

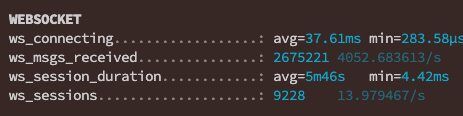

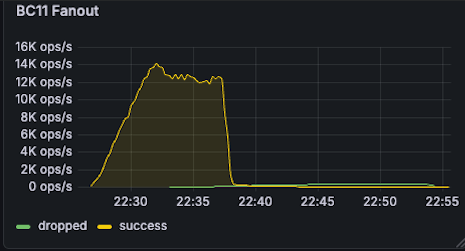

4. Messages / Fanout ✅

The virtual users received more than 2.6 million (ws_msgs_received) real-time stock price updates within 10 minutes. The server's fanout peak reached 12K to 14K ops/s (up to roughly 14,000 broadcasts per second).
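As a rough sanity check on these numbers, the sketch below turns the message total into average rates. It assumes a linear 5-minute ramp from 0 to 5000 users followed by a 5-minute hold, ignores the 30-second ramp-down, and treats 2.6 million as a lower bound; all variable names are illustrative.

```javascript
const totalMessages = 2600000; // ws_msgs_received (lower bound)
const peakUsers = 5000;
const rampSeconds = 300; // 5-minute ramp-up
const holdSeconds = 300; // 5-minute hold

// A linear ramp from 0 to the peak averages half the peak user count.
const userSeconds = (peakUsers / 2) * rampSeconds + peakUsers * holdSeconds;

// Average messages per second received by one connected user.
const perUserRate = totalMessages / userSeconds;

// Average messages per second received across all users over the run.
const overallRate = totalMessages / (rampSeconds + holdSeconds);

console.log(userSeconds);            // 2250000
console.log(perUserRate.toFixed(2)); // "1.16"
console.log(Math.round(overallRate)); // 4333
```

These are averages over the whole run as seen by the clients. The 12K to 14K ops/s figure is a server-side peak and is measured differently, so the two are not directly comparable.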

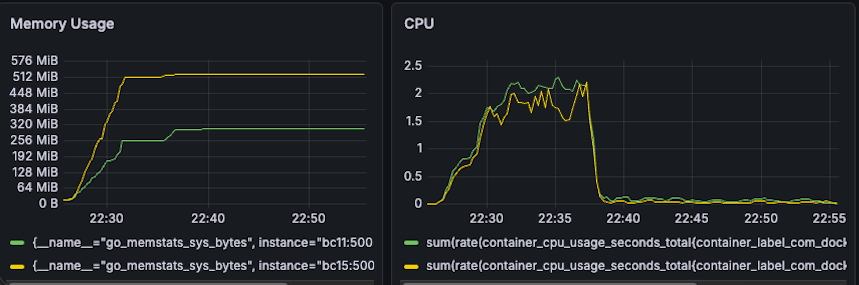

5. CPU & Memory ✅

Memory: Go memory usage followed a textbook pattern (ramp-up, then a stable plateau, then a steady level after the test ended). No memory leak.

CPU: The peak was approximately 2.5 cores. Handling 5000 users and thousands of broadcasts per second on about 2.5 cores is very efficient.

Result

After a thorough rework of the underlying goroutine lifecycle and concurrent locking, our push engine finally faced the ultimate test: the 5000 CCU capacity test.

In this test, the system not only maintained 5000 active WebSocket connections but also delivered more than 2.6 million real-time price update messages within about 10 minutes, with a peak broadcast rate of 14,000 ops/sec. The most exciting part was that when all 5000 users disconnected at once, system resources were reclaimed cleanly: more than 30,000 goroutines returned to zero almost instantly, with no memory or goroutine leak. This shows that the architecture handles high concurrency and stays stable while doing so.
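The figures reported above can be reduced to a rough per-connection budget. The sketch below is only back-of-the-envelope arithmetic on the reported peaks (30,000 is treated as a lower bound for the goroutine count), and the variable names are illustrative.

```javascript
const connections = 5000;     // peak active WebSocket connections
const peakGoroutines = 30000; // "more than 30,000", used as a lower bound
const peakCpuCores = 2.5;     // approximate CPU peak

// At least this many goroutines per connection at peak.
const goroutinesPerConnection = peakGoroutines / connections;

// Approximate CPU cores consumed per 1000 connections at peak.
const coresPer1000Connections = (peakCpuCores * 1000) / connections;

console.log(goroutinesPerConnection); // 6
console.log(coresPer1000Connections); // 0.5
```

These ratios describe this one run only; they are not a guarantee that resource usage scales linearly beyond 5000 connections.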

So far, both the spike test and the capacity test have succeeded. The next article looks at whether there are any other issues or performance tuning opportunities.